Should you write about real goals or expected goals? A guide for journalists.

From a statistical point of view the result of a football match is almost as much noise as it is signal. This is a fact that is very difficult to square with the detailed match reports written in newspapers and the analysis on Saturday night TV. But it is, nonetheless, a fact. A single match result from the Premier League provides only a small amount of insight into how good the two teams that played actually are.

A mathematical explanation of this can be found directly from the Poisson distribution. Goals in football are Poisson distributed and teams score about 1.4 goals per match on average. The variance and the mean are equal in the Poisson distribution. So the standard deviation is the square root of 1.4, which is 1.18. Thus the noise (1.18) is only slightly smaller than the signal (1.4). QED.
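For readers who want to check the arithmetic, here is a minimal Python sketch. It takes the Poisson assumption and the 1.4 goals per match from the paragraph above; the function names and the cut-off of 50 goals are illustrative choices, not part of the original analysis.

```python
import math

def poisson_pmf(k, mean):
    """Probability of exactly k goals when goals are Poisson with the given mean."""
    return math.exp(-mean) * mean**k / math.factorial(k)

def poisson_mean_and_sd(mean, max_goals=50):
    """Mean and standard deviation computed directly from the probabilities.

    max_goals truncates the sum; the probability of more than 50 goals is negligible.
    """
    probs = [poisson_pmf(k, mean) for k in range(max_goals + 1)]
    mu = sum(k * p for k, p in enumerate(probs))
    var = sum((k - mu) ** 2 * p for k, p in enumerate(probs))
    return mu, math.sqrt(var)

signal, noise = poisson_mean_and_sd(1.4)
print(round(signal, 2), round(noise, 2))  # prints: 1.4 1.18
```

The standard deviation comes out as the square root of the mean, which is the property the argument relies on.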

If you don’t believe the maths, then just think about the Manchester City and Arsenal victories on Sunday, respectively scoring in the 84th and 98th minute to win. The small number of goals means that matches are decided by narrow margins. The signal (the strongest team) is only slightly stronger than the noise (the fact that anything can happen). QED.

This makes it very difficult to write sensibly about football. People are interested in the latest results, and it would be very boring indeed if journalists wrote “well it was mostly noise”.

It is here that expected goals offer a glimmer of hope. Expected goals are a measure of chances created, and thus give a better measure of the quality of a team during a single match than goals. They typically contain less noise and more signal.

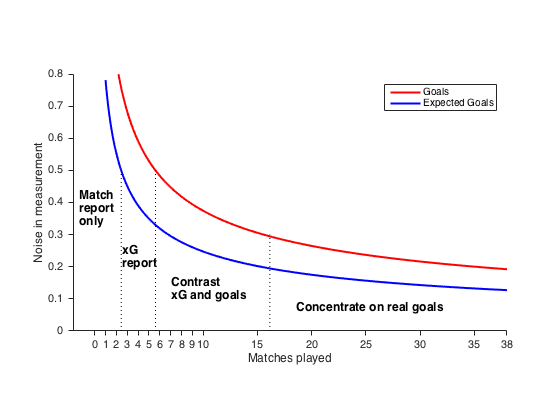

What I want to do now is give a rule of thumb for using expected goals in football writing. The graph above shows how the magnitude of the noise in the measurement of performance decreases with the number of matches played in a season, along with some recommendations for what sort of things to write.

1 or 2 matches: At the start noise is high for both expected goals and goals. When discussing one or two matches, I suggest sticking to ‘Match report only’: write about what happened, the tactics adopted, player movement etc. and speculate less about what this means for the team in the long term. There is no trend to be seen here, even in expected goals. Pundits saying “they won the match on expected goals” has no more meaning than the scoreline itself.

3 to 6 matches: I have written before about how 3 to 6 matches start to give us a clear picture of a team only if they consistently lose or win. It is here that an ‘xG report’ can work very well. The noise in xG is now less than 0.5 goals per match, giving us more insight than goals. If xG and goals tell different stories, now is the time to tell the reader that a team might not be quite as bad (or good) as it seemed.

7 to 16 matches: This is the most exciting time for xG journalism. Now goals are becoming a more reasonable measurement of performance. It is difficult to sustain a 10 match lucky streak and you don’t really expect to have a 10 match bad run unless the team is poor. So if xG and goals contradict each other then you have a real story on your hands: ‘contrast the two approaches’. Burnley are this season’s example. They have very low xG, but have got some pretty good results. It is worth trying to find out, as Rory Smith did for NYT and I did for Nordic bet, what the explanation might be.

17 or more matches: I once told a club analyst, whose team had over-performed xG for a whole season, that this was probably because his team was actually good. I’m not sure he was convinced, but he should have been. After 16 matches, the difference between the noise in xG and the noise in real goals is only 0.1 goal per match. This is a small difference and, most importantly, it is the size of difference at which we can’t discount that the xG model is wrong for a team. Expected goals are a mathematical model. Models aren’t reality. Goals are reality. So if a team has a player, a manager or a mentality that gives them an extra edge, a 0.1 goal per game edge, then this will be better picked up in real goals than in expected goals. This is why expected goals tables become less relevant or interesting as the season progresses. So ‘concentrate on real goals’.

Those are the rules. They are by no means failsafe, but they should be a helpful guide.
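The four windows can be written down as a simple lookup. The sketch below is only a restatement of the rule of thumb in Python; the function name `writing_advice` is hypothetical, and the boundaries are the ones given in the list above, with everything beyond 16 matches treated as the final window.

```python
def writing_advice(matches_played):
    """Return the rule-of-thumb label for a run of matches (hypothetical helper)."""
    if matches_played < 1:
        raise ValueError("need at least one match")
    if matches_played <= 2:
        return "Match report only"
    if matches_played <= 6:
        return "xG report"
    if matches_played <= 16:
        return "Contrast the two approaches"
    return "Concentrate on real goals"

# A team 13 matches into the season sits in the 7 to 16 window.
print(writing_advice(13))  # prints: Contrast the two approaches
```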

Not everyone agrees with me. I had one discussion on Twitter at the end of the 2015–16 season with someone who thought that xG were better than goals in all situations. I don’t think that can hold if we remember that xG models are averages over all teams in recent seasons. Teams differing by 0.1 goal per game from that model isn’t particularly drastic. So over a season the final table is the best estimate we have of a team’s performance.

Statsbomb’s editor James Yorke is careful that the articles externally contributed to the site only reflect long-term trends. No match reports or one-off stats, making it always a good read. But I have noticed that, on Twitter, James typically takes a more conservative view than I do about the 7–16 match window. He appears to trust xG and other shot stats further into the season, when deciding what is worth considering a true ‘trend’. It was a recent ‘Burnley are lucky’ tweet that spurred me into action with this article. I would argue that Burnley are probably over-performing slightly, but after 13 matches played they are in that interesting area where they deserve an explanation.

A couple of additional points. Firstly, if a manager changes, a key player is injured, or some other major event occurs at the club that seems to affect the club then these same rules apply from the point of the event. Three games after the event, it is time to look at the xG story. A year on and it is real goals that tell the story. Secondly, for evaluating individual players over a season xG can be useful, since the standard deviation for a player relative to xG can be even larger. Here both goals and xG are useful.

Happy writing.

Where did these curves come from?

The red curve for measurement error (noise) for goals is based on a team that scores 1.4 goals per match. I assume that the error is proportional to the square root of 1.4/n, where n is the number of matches. It is this curve that is plotted as noise in measurement. There are at least 19 ways of measuring the confidence interval for the Poisson distribution, and the one I choose here is quite simple. As n increases it becomes more reliable.

The blue curve for measurement error (noise) for expected goals is based on the average sample variance for expected goals across Premier League teams so far this season. For the model I use I found that to be 0.61. As for goals, I then assume that the error is proportional to the square root of 0.61/n, where n is the number of matches. It is this curve that is plotted. The estimate of 0.61 is by no means perfect and the reader is free to try their own values and see how the results are affected.
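To try other values, the two curves can be reproduced in a few lines of Python. This is a sketch under one extra assumption: the text says the error is proportional to the square root of variance/n, and here the constant of proportionality is taken to be 1. The names `noise`, `GOALS_VARIANCE` and `XG_VARIANCE` are illustrative.

```python
import math

GOALS_VARIANCE = 1.4  # Poisson: variance equals the mean goals per match
XG_VARIANCE = 0.61    # sample variance of xG quoted above; change it to test other models

def noise(variance, n_matches):
    """Measurement error after n_matches, assuming a proportionality constant of 1."""
    return math.sqrt(variance / n_matches)

for n in (1, 2, 3, 6, 10, 16, 38):
    goals_noise = noise(GOALS_VARIANCE, n)
    xg_noise = noise(XG_VARIANCE, n)
    gap = goals_noise - xg_noise
    print(f"{n:2d} matches: goals {goals_noise:.2f}, xG {xg_noise:.2f}, gap {gap:.2f}")
```

With these inputs the xG noise drops below 0.5 goals per match at three matches, and the gap between the two curves is about 0.1 at 16 matches, which matches the thresholds used in the rules.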